As AI inference rapidly becomes one of the largest drivers of electricity demand, a new class of infrastructure is emerging to better align compute workloads with energy availability.

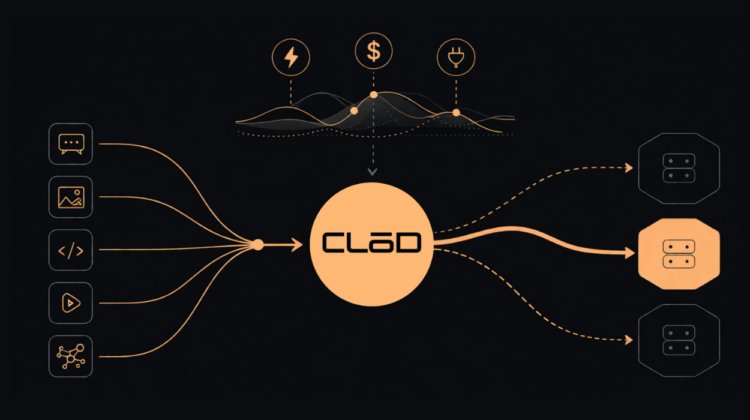

Vancouver’s LōD Technologies this week announced the launch of CLōD, a compute flexibility platform designed specifically for AI inference. The company positions it as the first production system capable of dynamically routing inference workloads based on real-time electricity prices and grid conditions.

The shift comes as inference workloads (fueled by the rise of AI agents, new applications, and so-called “vibe coding”) begin to overtake training as a driver of ongoing energy consumption. That trend is forcing operators and infrastructure providers to rethink how and where compute is deployed.

CLōD introduces a model that applies energy market dynamics directly to compute orchestration. Rather than curtailing operations during grid events, the platform adjusts where inference workloads run based on price signals, effectively treating compute as a flexible, grid-responsive resource.

“Electric grids rely on price signals to balance supply and demand,” said Medi Naseri, CEO of LōD Technologies. “CLōD extends that same mechanism to AI compute. When the grid sends a signal, workloads can respond automatically without disrupting applications or end users.”

Built on LōD’s energy-aware orchestration technology, the platform operates at the application layer, dynamically pricing inference tokens based on real-time electricity costs, grid constraints, and demand response programs. Workloads are then routed to regions where energy is cheaper or more abundant.
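LōD has not published CLōD's pricing formula or API. As a rough illustration of the general idea only, the sketch below prices tokens per region from electricity cost and grid constraints, then routes a request to the cheapest region within a latency budget. Everything here is a hypothetical assumption and not LōD's method: the `Region` fields, the 50% energy-linked share of the token price, the 2× penalty for constrained grids, the $50/MWh reference price, and the 50 ms default latency budget.

```python
from dataclasses import dataclass


@dataclass
class Region:
    # Hypothetical per-region inputs; not fields from CLōD.
    name: str
    price_per_mwh: float      # real-time electricity price, $/MWh
    grid_constrained: bool    # e.g. an active demand-response event
    added_latency_ms: float   # extra latency vs. the nearest region


def token_price(
    region: Region,
    base_price_per_mtok: float,
    energy_share: float = 0.5,             # hypothetical energy-linked share
    constraint_penalty: float = 2.0,       # hypothetical multiplier
    reference_price_per_mwh: float = 50.0, # hypothetical reference price
) -> float:
    """Hypothetical price per million tokens in a region.

    The energy-linked share of the base price scales with the local
    electricity price relative to a reference; the rest is fixed.
    Constrained grids are priced up so traffic moves elsewhere.
    """
    fixed = base_price_per_mtok * (1 - energy_share)
    energy = base_price_per_mtok * energy_share * (
        region.price_per_mwh / reference_price_per_mwh
    )
    price = fixed + energy
    if region.grid_constrained:
        price *= constraint_penalty
    return price


def route(
    regions: list[Region],
    base_price_per_mtok: float,
    max_added_latency_ms: float = 50.0,
) -> Region:
    """Pick the cheapest region whose extra latency fits the budget."""
    candidates = [r for r in regions if r.added_latency_ms <= max_added_latency_ms]
    if not candidates:
        raise ValueError("no region within the latency budget")
    return min(candidates, key=lambda r: token_price(r, base_price_per_mtok))
```

With a base price of 1.0, a nearby region paying $120/MWh prices at 1.7, a grid-constrained region paying $20/MWh at 1.4, and an unconstrained region paying $30/MWh at 40 ms extra latency at 0.8, so `route` picks the last one. A region beyond the latency budget is never considered, however cheap its power. Expressing flexibility as a price lets the grid signal shift demand without throttling any single site.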

This approach contrasts with traditional data centre flexibility strategies, which have largely relied on throttling or curtailing workloads—often creating operational risk and potential conflicts with service-level agreements.

By shifting flexibility to routing and pricing, LōD claims performance impacts remain minimal: early deployments have added roughly 50 milliseconds of latency while cutting costs by up to 60% compared with standard market rates.

The company says adoption has been immediate: within days of launch, CLōD had processed billions of inference tokens and attracted more than 1,500 developers, which LōD cites as early demand for energy-aware compute infrastructure.

For data centre operators, the implications are significant. Platforms like CLōD point toward a future where compute is not only optimized for performance and cost, but also actively participates in grid balancing—turning large-scale AI infrastructure from a growing strain on energy systems into a potential asset.

The launch marks a step toward what LōD describes as “grid-interactive computing,” where the economics of AI workloads are directly linked to the dynamics of electricity markets—an idea that is quickly gaining relevance as Canada and other regions scale both AI capacity and power infrastructure in parallel.